AI avatar software development: Choosing the right solution for your business

AI avatars, also called digital humans, are moving from demos into production. Teams across support, training, healthcare, and product onboarding are piloting visual, conversational agents that can talk, listen, and emote.

This guide explains how AI avatar software development works, compares three leading solutions — FBX-based pipelines, HeyGen Realtime Avatars, and NVIDIA ACE — and helps technical and product leaders choose the right fit for their use case, constraints, and roadmap.

Need an AI avatar solution that works in the real world? Globaldev helps businesses design and build scalable digital human experiences tailored to their users and workflows.

What is an AI avatar (digital human)?

An AI avatar is a visual, interactive agent powered by conversational AI. It blends language understanding, dialogue management, speech synthesis, computer vision, and real-time rendering so users can communicate naturally through voice and video.

In practice, “AI avatar software development” spans more than the face on screen: it is the end-to-end system that detects speech, reasons over knowledge, speaks back with a believable voice, and animates an on-brand character with lip sync and expressions.

For this article, we use “AI avatar,” “digital human,” and “AI spokesperson” interchangeably, focusing on production-grade experiences rather than one-off demo videos.

The rising popularity of AI avatars

The adoption of AI avatars is rapidly accelerating across various industries. This surge in popularity can be attributed to several factors:

- Enhanced user engagement: AI avatars provide a more engaging and personalized experience compared to traditional interfaces. They can interact with users in real time, responding to queries and assisting naturally and intuitively. According to a report by Grand View Research, the global virtual avatar market size was valued at USD 10.03 billion in 2022 and is expected to expand at a compound annual growth rate (CAGR) of 45.7% from 2023 to 2030. This highlights the growing demand for interactive and personalized digital experiences.

- Cost efficiency: While initial development costs vary, AI avatars can streamline operations and reduce long-term expenses by automating repetitive tasks and providing 24/7 support. A Juniper Research study projected that AI-powered customer service, including virtual assistants and avatars, would save businesses $11 billion annually by 2023, driven by reduced labor costs and improved efficiency.

- Technological advancements: Continuous progress in AI, machine learning, and computer graphics has significantly improved both the realism and the functionality of AI avatars, making them more appealing for commercial applications. Advances in neural rendering and generative AI are driving this growth.

- Telemedicine and healthcare: Healthtech is one of the fastest-growing areas for AI avatar solutions. AI avatars are now used for remote patient consultations, medical guidance, and emotional support, which matters more as the need for remote healthcare grows. Avatars built for telemedicine can enhance patient engagement and accessibility. A MarketsandMarkets report projects that the global telehealth market will reach $431.8 billion by 2027, driven in part by the use of AI-powered virtual assistants.

- Customer service and support: AI avatars are increasingly used to handle customer inquiries, provide product information, and offer technical support. This allows businesses to provide instant assistance to customers, improving satisfaction and loyalty.

- Marketing and advertising: Brands are leveraging AI avatars to create engaging and personalized marketing campaigns. AI avatars can act as virtual influencers, brand ambassadors, and product demonstrators, enhancing brand awareness and driving sales. The virtual influencer market alone is estimated to be worth billions, with brands investing heavily in digital avatars for marketing.

- Training and education: AI avatars can be used to create interactive training simulations and educational content, providing personalized learning experiences for students and employees.

- AI video generation: AI video generators are increasingly popular because they allow teams to create high-quality video content with AI avatars. The AI video generation market is growing rapidly and is projected to reach a multi-billion-dollar size in the coming years.

How AI avatar software works: a reference architecture

Most avatar systems follow a similar flow from user input to animated response:

- Input capture. Audio is captured from the browser or mobile device. For real-time interactions, media streams via WebRTC.

- Speech-to-Text (STT). Audio is transcribed using cloud or on-prem ASR/STT engines, depending on latency and privacy needs.

- Conversation and reasoning. An orchestration layer routes the transcript to a dialogue manager or LLM. RAG pipelines ground responses in enterprise data; business logic and API integrations are invoked here.

- Text-to-Speech (TTS). The response is synthesized to speech. Advanced TTS supports visemes or phonemes to drive lip sync and can render distinct speaking styles or emotions.

- Animation and rendering. A 2D or 3D character animates in sync with audio — via a real-time engine (Unreal, Unity, WebGL/WebGPU) or a cloud service that generates and streams visuals.

- Delivery and analytics. The response is streamed to the user with minimal latency; observability captures quality, timeouts, errors, and compliance events.
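
The flow above can be sketched as a small orchestration layer. This is a minimal illustration, not a reference implementation: the class names, the stage signatures, and the stub functions are all assumptions made for the example, and each stub stands in for whichever STT, LLM/RAG, or TTS engine you actually choose.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class AvatarReply:
    transcript: str       # what the STT stage heard
    answer: str           # text produced by the reasoning stage
    audio: bytes          # synthesized speech
    visemes: List[str]    # mouth-shape cues handed to the renderer


@dataclass
class AvatarPipeline:
    # Each stage is a plain callable, so a cloud API, an on-prem model,
    # or a test stub can be swapped in without changing the flow.
    stt: Callable[[bytes], str]
    reason: Callable[[str], str]
    tts: Callable[[str], Tuple[bytes, List[str]]]

    def handle_turn(self, audio_in: bytes) -> AvatarReply:
        transcript = self.stt(audio_in)        # Speech-to-Text
        answer = self.reason(transcript)       # dialogue manager / LLM / RAG
        audio_out, visemes = self.tts(answer)  # Text-to-Speech + lip-sync cues
        return AvatarReply(transcript, answer, audio_out, visemes)


# Stub stages (hypothetical placeholders, not real engines).
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_reason(text: str) -> str:
    return f"You said: {text}"


def fake_tts(text: str) -> Tuple[bytes, List[str]]:
    # One placeholder viseme per word, preceded by silence.
    return text.encode("utf-8"), ["sil"] + ["aa"] * len(text.split())


pipeline = AvatarPipeline(stt=fake_stt, reason=fake_reason, tts=fake_tts)
reply = pipeline.handle_turn(b"hello avatar")
print(reply.answer)
```

Keeping every stage behind a simple callable is one way to leave the build-vs-buy decision open per layer: a managed service and a self-hosted model can sit behind the same interface.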

Architecturally, there are two primary modes:

- Real-time, interactive avatars. Low-latency two-way voice, typically targeting 300–700 ms end-to-end. Requires WebRTC, GPU-accelerated rendering, and careful orchestration.

- Asynchronous video avatars. Text- or script-driven video generation for training, onboarding, or content localization. Focus is on quality and scale rather than interactivity.
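
For the real-time mode, it can help to treat the end-to-end target as a budget split across stages. The sketch below assumes hypothetical per-stage measurements and uses the upper end of the 300–700 ms range as the budget; the stage names and numbers are illustrative only.

```python
from typing import Dict

REALTIME_BUDGET_MS = 700.0  # upper end of the 300-700 ms range cited above


def check_budget(stage_ms: Dict[str, float],
                 budget_ms: float = REALTIME_BUDGET_MS) -> Dict[str, object]:
    """Sum per-stage latencies and report how they compare to the budget."""
    total = sum(stage_ms.values())
    slowest = max(stage_ms, key=stage_ms.get)
    return {
        "total_ms": total,
        "within_budget": total <= budget_ms,
        "headroom_ms": budget_ms - total,  # negative means over budget
        "slowest_stage": slowest,
    }


# Hypothetical measurements for one conversational turn.
measured = {"stt": 120.0, "llm": 350.0, "tts": 150.0, "render": 60.0, "network": 80.0}
report = check_budget(measured)
print(report)
```

In this made-up example the turn lands over budget and the reasoning stage dominates, which is the kind of signal that would point toward streaming responses or a smaller model before touching the renderer.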

Build vs buy: choosing your path

The decision often comes down to time-to-value, control, and governance:

- When buying makes sense. If you need to launch quickly, your use case is well served by a vendor’s capabilities, and their terms satisfy your compliance needs, SaaS APIs can compress timelines from months to weeks. This is common for spokesperson-style avatars or pilots where speed outranks deep customization.

- When building makes sense. If you require strict data residency, on-prem or VPC isolation, deep character customization, integration with an existing 3D pipeline, or control over latency and costs at scale, a custom build using 3D engines and open or self-hosted models is the better long-term bet.

- Hybrid is common. Many teams buy for one layer and build the rest: for example, a self-hosted STT/LLM stack coupled with a managed avatar renderer, or an in-house Unreal character driven by a commercial TTS.

Key vendor evaluation criteria:

- Fit for modality and latency: real-time vs async, expected round-trip target

- Customization: likeness licensing, brand control, body gestures, multilingual, accessibility

- Integration: APIs/SDKs, WebRTC support, mobile/web targets, SSO and enterprise auth

- Governance: data privacy, model and content safety, audit logs, SOC 2/GDPR/HIPAA alignment

- Commercials: pricing model, usage tiers, licensing/IP terms, exportability, lock-in risk
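
One way to make these criteria comparable across vendors is a simple weighted score. The weights and the 1-to-5 scores below are purely hypothetical placeholders for two unnamed candidates; they should be replaced with your own priorities and evaluation results.

```python
from typing import Dict

# Hypothetical weights for the five criteria listed above (they sum to 1.0).
CRITERIA_WEIGHTS: Dict[str, float] = {
    "latency_fit": 0.25,
    "customization": 0.20,
    "integration": 0.20,
    "governance": 0.20,
    "commercials": 0.15,
}


def weighted_score(scores: Dict[str, float],
                   weights: Dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Illustrative 1-5 scores for two made-up candidates.
candidates = {
    "vendor_a": {"latency_fit": 4, "customization": 3, "integration": 5,
                 "governance": 3, "commercials": 4},
    "vendor_b": {"latency_fit": 3, "customization": 5, "integration": 3,
                 "governance": 5, "commercials": 2},
}

ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranking:
    print(name, round(weighted_score(candidates[name]), 2))
```

A score like this does not replace a pilot, but it forces the team to state explicitly how much governance matters relative to speed or price.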

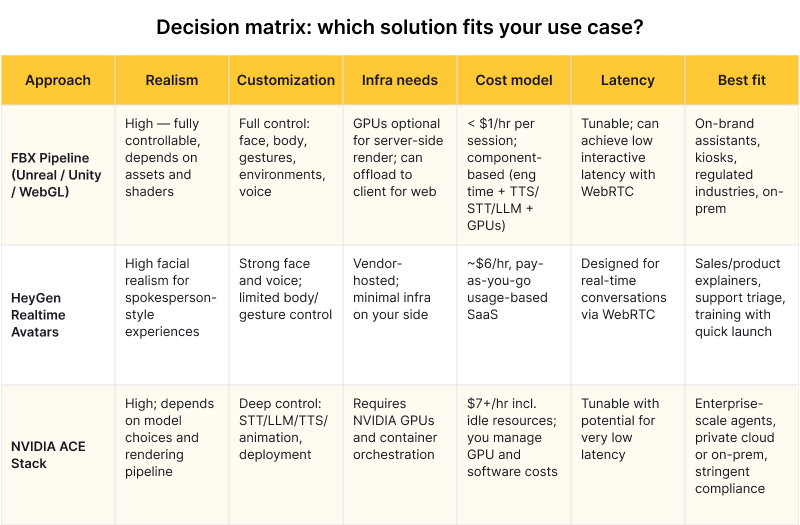

What is the difference between FBX avatars, HeyGen, and NVIDIA ACE?

Below we evaluate the three leading approaches using consistent criteria to help you find the right fit.

FBX-based pipeline (Unreal / Unity / WebGL)

An FBX character and animations are imported into an engine such as Unreal Engine or Unity, or rendered in-browser via WebGL/WebGPU using frameworks like Three.js. The avatar is created once and used as a static file that dynamically plays animations, including lip sync, emotions, and body movements.

Advantages:

- Unlimited customization. Full control over clothing, animations, voice, gestures, and environments. You can use a real person’s face for custom likeness.

- Affordable pricing. The cheapest user session among all solutions — under $1 per hour per session.

- Flexible deployment. On-prem GPU, private cloud, or edge. Ideal for strict compliance or low-latency intranet scenarios.

- Voice flexibility. Voice can be changed using any TTS service, including AWS Polly or ElevenLabs.

Disadvantages:

- Lower realism baseline. Less realistic than pre-rendered or deepfake-style avatars without significant asset and shader investment.

- Higher engineering overhead. Character creation, animation retargeting, lip sync calibration (viseme data must be tuned per FBX model), and GPU operations are all owned by your team.

- Content complexity. Complex or highly specific animations require a motion designer or modeler.

Best fit: Long-lived on-brand digital humans; regulated industries; kiosks and embedded devices; organizations with existing Unreal/Unity pipelines.
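
The per-model lip sync calibration mentioned above can be pictured as a lookup table from viseme labels to blend-shape weights. The following is a minimal sketch: the viseme labels, blend-shape names, weights, and the `gain` parameter are all assumptions for illustration, and a real rig would use whatever names its FBX model and TTS engine actually expose.

```python
from typing import Dict, List, Tuple

# Hypothetical calibration for one character: viseme -> blend-shape weights (0..1).
VISEME_TO_BLENDSHAPES: Dict[str, Dict[str, float]] = {
    "sil": {},                                    # silence: mouth at rest
    "aa": {"jawOpen": 0.7, "mouthFunnel": 0.1},   # open vowel
    "oo": {"jawOpen": 0.3, "mouthPucker": 0.8},   # rounded vowel
    "mm": {"mouthClose": 1.0},                    # closed-lip consonant
}


def keyframes(timeline: List[Tuple[float, str]],
              gain: float = 1.0) -> List[Tuple[float, Dict[str, float]]]:
    """Turn (time_seconds, viseme) cues into (time, blend-shape weights) keyframes.

    `gain` scales how exaggerated the mouth shapes are; weights are clamped to 1.0.
    Unknown visemes fall back to the rest pose.
    """
    frames = []
    for t, viseme in timeline:
        weights = VISEME_TO_BLENDSHAPES.get(viseme, {})
        frames.append((t, {name: min(1.0, w * gain) for name, w in weights.items()}))
    return frames


# Example cues, as a TTS engine with viseme output might provide them.
cues = [(0.00, "mm"), (0.12, "aa"), (0.30, "sil")]
for frame in keyframes(cues, gain=0.5):
    print(frame)
```

Tuning then becomes a matter of editing the table and gain per character rather than changing engine code, which is where much of the calibration effort tends to go.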

HeyGen Realtime Avatars

HeyGen provides APIs for video generation and a Realtime Avatar that streams an expressive face and voice over WebRTC. It emphasizes fast setup, broad language coverage, and hosted infrastructure so teams can prototype and iterate quickly.

Advantages:

- High realism. Delivers highly realistic facial expressions and speech with support for 175 languages and dialects.

- Personalization. Create a personalized avatar using your own face, voice, and facial expressions (licensing and consent requirements apply).

- Fast time-to-value. Simple to implement via API/SDK and WebRTC; minimal infrastructure on your side.

- Pay-as-you-go pricing. Usage-based at approximately $6/hour, but you only pay when the avatar is actively talking.

- Quick responsiveness. Designed for real-time conversations with low latency via WebRTC.

Disadvantages:

- Face-only animation. Unlike a bespoke 3D pipeline, it does not support full-body animation or complex custom gestures.

- Vendor dependency. You depend on vendor infrastructure, SLAs, data handling, and regional availability. Evaluate carefully for enterprise use.

- Connectivity requirement. Requires a stable internet connection for the best experience.

Best fit: Sales explainers, product walk-throughs, inbound support triage, or internal training where a realistic spokesperson and quick launch matter most.

NVIDIA ACE (Avatar Cloud Engine)

NVIDIA ACE is a collection of SDKs and microservices for building interactive digital humans. It combines NVIDIA Riva for ASR/TTS, NVIDIA Maxine for audio/video effects, and Omniverse Audio2Face for AI-driven facial animation. It converts speech into synchronized 3D animations for AI avatars running on NVIDIA GPUs.

Advantages:

- Enhanced face animation. Delivers highly expressive facial movements and emotions; allows for realistic face creation and animation with Unreal Engine.

- Fine-grained pipeline control. GPU-accelerated control over the full speech-to-animation pipeline; integrate with Unreal, Omniverse, or custom renderers.

- Flexible private deployment. Containerized services via NVIDIA NGC, alignment with enterprise security baselines, and potential to keep sensitive data in your VPC or data center.

- On-prem advantage. A natural fit for companies that already run GPU-powered on-premises servers (Tesla T4 at minimum).

Disadvantages:

- Most expensive. A single session on Azure cloud infrastructure costs approximately $7+/hour, and idle cloud resources (VMs, volumes, NAT) are billed even when no session is running. Deployment takes 40–50 minutes.

- Engineering and infra intensive. You own container orchestration, GPU provisioning, scaling, observability, and updates across multiple services.

- Maturing ecosystem. Documentation and community are still developing; budget time for integration, benchmarking, and tuning.

- No body animation. Audio2Face does not support body animation.

Best fit: Enterprises needing deep control, on-prem or private-cloud deployment, and tight latency/quality budgets for high-traffic interactive use.

Decision matrix: which solution fits your use case?

Note: HeyGen capabilities and pricing evolve; always check the latest on their docs and pricing pages. NVIDIA ACE is a toolbox, not a turnkey product — scope engineering effort carefully.
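
To compare the session costs quoted in this article on equal terms, a rough calculation like the one below can help. The hourly rates come from the sections above (treated as approximate); the monthly session hours, the share of time the avatar is actually talking, and the idle infrastructure cost are hypothetical inputs you would replace with your own figures.

```python
def monthly_cost(rate_per_hour: float, session_hours: float,
                 billable_fraction: float = 1.0, idle_cost: float = 0.0) -> float:
    """Estimate monthly spend: billed hours times rate, plus any idle infrastructure cost."""
    return rate_per_hour * session_hours * billable_fraction + idle_cost


SESSION_HOURS = 1000.0   # assumption: total session hours per month
TALK_FRACTION = 0.4      # assumption: share of session time the avatar is speaking
ACE_IDLE_COST = 500.0    # assumption: idle VMs, volumes, NAT per month

estimates = {
    # "under $1 per hour per session", so this is an upper bound
    "fbx_pipeline": monthly_cost(1.0, SESSION_HOURS),
    # approx. $6/hour, billed only while the avatar is actively talking
    "heygen_realtime": monthly_cost(6.0, SESSION_HOURS, billable_fraction=TALK_FRACTION),
    # approx. $7+/hour plus idle resources, so this is a lower bound
    "nvidia_ace": monthly_cost(7.0, SESSION_HOURS, idle_cost=ACE_IDLE_COST),
}

for name, cost in estimates.items():
    print(f"{name}: ~${cost:,.0f}/month")
```

These numbers cover runtime only. Engineering, content, and compliance costs differ substantially between the three approaches and can outweigh the per-hour rates.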

Where AI avatars deliver value

- Customer support. Visual agents deflect repetitive Tier 1 requests, collect intent, and escalate with richer transcripts. Tightly integrated with CRMs and ticketing, they improve containment and CSAT.

- Product onboarding and sales. A spokesperson avatar walks users through setup, personalization, or complex features, reducing abandonment and driving activation.

- Training and L&D. Localize training at scale with asynchronous video avatars. For competency-based learning, real-time avatars simulate role-plays and provide coaching feedback.

- Telehealth and patient engagement. Avatars handle intake, pre-visit triage, and post-visit education while respecting privacy and accessibility requirements.

- Financial services and insurance. Guided flows for claims, KYC education, and policy explanation reduce call volumes and errors.

- Retail and kiosks. In-store or embedded assistants improve wayfinding, product discovery, and service without increasing headcount.

Enterprise guardrails: privacy, safety, and operations

Production deployments must satisfy more than a good demo:

- Data privacy and compliance. Define how audio, transcripts, and model prompts/responses are stored. Align with GDPR, SOC 2, HIPAA where applicable.

- Security and governance. Enforce SSO, RBAC, and audit trails. Validate vendor data residency options and subprocessors for SaaS solutions.

- SLAs and resilience. Target uptime, rate limits, failover, and degraded modes. Provide fallbacks to voice-only or chat if video or rendering degrades.

- Latency SLOs. Set end-to-end budgets for STT, LLM/RAG, TTS, and render. Test across regions and devices; for WebRTC, measure jitter and packet loss handling.

- Accessibility and inclusivity. Offer captions, multiple language options, adjustable speaking rates, and clear opt-in for biometric likeness usage.

- Content safety and brand guardrails. Apply prompt safeguards, tone constraints, and post-processing moderation. For RAG, restrict retrieval to vetted sources and log citations.
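
The fallback to voice-only or chat described under SLAs and resilience can be expressed as a small decision function. This is a sketch under stated assumptions: the health flags, the packet-loss thresholds, and the mode names are hypothetical, and real values should come from your own WebRTC measurements.

```python
from enum import Enum


class Mode(Enum):
    VIDEO = "video"            # full avatar with rendering
    VOICE_ONLY = "voice_only"  # audio without the animated character
    CHAT = "chat"              # text-only fallback


def choose_mode(render_healthy: bool, tts_healthy: bool, packet_loss_pct: float,
                max_loss_for_video: float = 5.0,
                max_loss_for_voice: float = 15.0) -> Mode:
    """Pick the richest mode the current conditions can support."""
    # Without speech synthesis, or on a very lossy link, fall back to text.
    if not tts_healthy or packet_loss_pct > max_loss_for_voice:
        return Mode.CHAT
    # If rendering is down or the link is too lossy for video, keep the voice.
    if not render_healthy or packet_loss_pct > max_loss_for_video:
        return Mode.VOICE_ONLY
    return Mode.VIDEO


print(choose_mode(render_healthy=True, tts_healthy=True, packet_loss_pct=1.0))
print(choose_mode(render_healthy=False, tts_healthy=True, packet_loss_pct=1.0))
print(choose_mode(render_healthy=True, tts_healthy=True, packet_loss_pct=20.0))
```

Logging each mode change alongside the triggering condition also feeds the observability and SLA reporting described above.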

Implementation roadmap and TCO modeling

Start small, then harden. Begin with discovery to define target tasks, success metrics, languages, and accessibility requirements.

- Prototype. Pick one stack path and instrument latency and quality. Validate voice, lip sync, and edge cases.

- Pilot. Expand to a controlled user group with observability and human-in-the-loop review. Capture support handover flows and analytics.

- Production. Establish SLAs, CI/CD for prompts and assets, GPU and cost monitoring, and ongoing evaluation. Plan for A/B testing of voices, gestures, and dialogue strategies.

TCO components to model:

- Usage-based AI services: STT per minute, TTS per character, LLM tokens, vector DB reads/writes for RAG

- Rendering and GPUs: cloud or on-prem GPU hours, autoscaling, and egress

- Content pipeline: character creation, motion capture or animation, localization, ongoing updates

- Engineering and DevOps: orchestration services, WebRTC / media servers, observability, CI/CD, and QA

- Compliance and governance: security reviews, data retention policies, DPIAs, and audits

- Vendor licensing: SDK licenses, likeness or voice licensing, per-seat admin tools

A practical approach: estimate cost per minute of interaction across STT, LLM, TTS, and render; multiply by expected concurrency and duty cycle; then add fixed platform and team costs. Validate with load tests before scaling.
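
The estimate described above can be written out as a short calculation. All unit costs, the concurrency figure, the duty cycle, and the fixed costs below are hypothetical; the function name and the 30-day month are also assumptions made for the example.

```python
from typing import Dict

MINUTES_PER_MONTH = 30 * 24 * 60  # assumption: a 30-day month


def monthly_tco(per_minute_costs: Dict[str, float], peak_concurrency: int,
                duty_cycle: float, fixed_monthly: float) -> float:
    """Variable cost (per-minute cost x active minutes) plus fixed platform and team costs."""
    cost_per_minute = sum(per_minute_costs.values())
    # duty_cycle is the fraction of the month each concurrent slot is actually in use.
    active_minutes = peak_concurrency * duty_cycle * MINUTES_PER_MONTH
    return cost_per_minute * active_minutes + fixed_monthly


# Hypothetical unit costs in USD per minute of interaction.
unit_costs = {"stt": 0.010, "llm": 0.020, "tts": 0.015, "render": 0.050}

estimate = monthly_tco(unit_costs, peak_concurrency=20, duty_cycle=0.25,
                       fixed_monthly=15_000.0)
print(f"Estimated monthly TCO: ${estimate:,.0f}")
```

Running the same function with pessimistic and optimistic duty cycles gives a quick sensitivity range to compare against load-test results.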

AI avatars built by Globaldev

If you need to explore AI avatar solutions or are looking for experts to guide you through the process, Globaldev Group can help you choose the right solution that fits your needs and budget.

Globaldev partners with product and engineering teams to ship AI avatars that work in production:

- Advisory and discovery. Use-case framing, latency and compliance requirements, build-vs-buy analysis, and vendor selection.

- Prototyping and integration. HeyGen Realtime integration, custom 3D pipelines with Unreal/Unity and FBX assets, or NVIDIA ACE deployments with Riva/Maxine/Audio2Face.

- AI and MLOps. RAG pipelines, prompt and tool orchestration, evaluation harnesses, observability, and content safety guardrails.

- GPU and platform engineering. Cloud and on-prem GPU clusters, autoscaling, media delivery, and cost optimization.

- Content pipeline. Character creation, animation, and localization workflows.

See how we supported a conversational automation platform: AI-powered virtual assistants case study.

Closing thoughts

There is no single “best” AI avatar solution — there is a best fit for your goals, risk posture, and timelines:

- Need speed and spokesperson-style interactions? A managed API like HeyGen gets you live quickly.

- Need brand-perfect control, on-prem options, or deep gestures? An FBX-based pipeline with Unreal or Unity is a strong foundation.

- Need a GPU-accelerated, fully controlled speech-to-animation stack? NVIDIA ACE provides the building blocks, with the trade-off of higher engineering investment.

AI avatars are already transforming customer service, training, telemedicine, and sales. The technology is making quick progress — and more businesses are expected to deploy production-grade avatar solutions within the next few years.

If you are exploring AI avatar development for support, training, or product onboarding, Globaldev can help you evaluate options, pilot a solution, and scale it with the right guardrails.