How AI skin analysis works in aesthetic medicine: A practical guide for clinics

Adoption is increasing because AI skin analysis platforms help bridge one of the biggest challenges in aesthetic medicine: aligning patient expectations with realistic treatment results. In an international survey across 13 countries, 61.3% of dermatologists reported already using AI tools in clinical workflows, particularly for image-based assessment and decision support.

A comprehensive virtual skin consultation platform with photo analysis, built as a structured skin analysis app for med spas and aesthetic clinics, can evaluate multiple facial metrics within seconds. These systems typically assess wrinkles, pore size, pigmentation, redness, and texture.

In this article, you will learn the operational benefits of integrating AI into your practice, how objective data improves patient education, and the practical steps required to successfully deploy these analysis systems to enhance your clinical capabilities.

What AI facial analysis actually means

In a world of Instagram filters and Face ID smartphone unlocking, computer vision skin analysis in a medical context is a fundamentally different category of technology.

While a beauty filter simply overlays a blur to hide a blemish, medical-grade AI performs a structured computational analysis of a high-resolution image. It doesn't just look at the face; it maps, segments, and analyzes it through four distinct technical layers.

The 4 technical layers of clinical AI

Layer 1 — Facial landmark detection

This is the skeletal framework of the analysis. The AI identifies specific anchors: the corners of the eyes, the curve of the lips, the jawline, the cheekbones, and the bridge of the nose. Facial landmark detection in aesthetics is the foundation for symmetry mapping.

Without these precise coordinates, a simulation of a filler injection would be spatially inaccurate; with them, the software can estimate how volume is likely to sit against the patient's unique bone structure.

Layer 2 — Skin segmentation

The AI doesn't treat the face as a single canvas. It segments or carves the image into specific anatomical zones: the forehead, the periorbital zone (around the eyes), the nasolabial folds, the cheeks, and the chin. This allows for hyper-localized diagnostics, recognizing, for example, that while the cheeks may have high hydration, the periorbital area requires immediate intervention for fine lines.

Layer 3 — Feature extraction

This is where individual skin features are measured. The system performs pigmentation analysis, identifying melanin clusters, pore size, redness (vascularity), and texture variations. By isolating these features from the rest of the image, the AI can count wrinkles and estimate their depth, then compare the result against a large reference database of similar skin types.

Layer 4 — Prediction modeling

The final layer is where the data becomes actionable, and where AI skin age detection takes place. By comparing the extracted features against thousands of clinical datasets, the model estimates biological skin age and treatment suitability. It can project how a specific Botox zone is likely to respond to neurotoxins or how a filler projection may look post-procedure, moving the consultation from subjective estimation toward data-supported planning.

Beauty filters vs. medical AI facial analysis

Understanding these differences is crucial for building patient trust. Patients need to know they aren't looking at a photoshopped version of themselves, but a clinically validated projection.

By separating filters from physics-based modeling, clinics can position themselves as high-tech authorities that prioritize accuracy over vanity.

Step-by-step: How AI facial analysis works technically

Achieving medical-grade accuracy in AI facial analysis requires a standardized sequence of operations, often referred to as the technical pipeline. This process ensures that the data gathered is actionable and, most importantly, repeatable across different consultation sessions.

Step 1 — Image capture: The foundation of accuracy

The largest variable in AI skin analysis accuracy is the quality of the initial photograph. To combat environmental noise, professional AI systems use:

- Lighting normalization: Software corrects for warm or cool office lighting to reveal true skin tone (ITA° index) and vascularity.

- Pose correction: The AI detects if a patient’s head is tilted or rotated, prompting a re-take or digitally normalizing the alignment to ensure landmarks are not distorted.

- Resolution requirements: Medical-grade tools require high-fidelity capture to distinguish between a fine line and a deep wrinkle.
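
Two of the capture checks above reduce to short formulas, sketched here under stated assumptions. The head-roll check uses the line between two eye-corner landmarks, and the skin tone value uses the standard Individual Typology Angle formula on CIELAB values that have already been lighting-corrected. The function names and the 5-degree tolerance are illustrative choices, not values taken from any particular product.

```python
import math

def roll_angle_degrees(left_eye, right_eye):
    """Head roll from the line joining two eye-corner landmarks.

    Points are (x, y) pixel coordinates with y increasing downward.
    A result of 0 means the eyes are level in the image.
    """
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    return math.degrees(math.atan2(dy, dx))

def needs_retake(left_eye, right_eye, max_roll_deg=5.0):
    # max_roll_deg is a hypothetical tolerance, not a clinical standard.
    return abs(roll_angle_degrees(left_eye, right_eye)) > max_roll_deg

def ita_degrees(l_star, b_star):
    """Individual Typology Angle: arctan((L* - 50) / b*) in degrees.

    atan2 matches that formula whenever b* > 0, which is the usual
    case for skin measurements.
    """
    return math.degrees(math.atan2(l_star - 50.0, b_star))

if __name__ == "__main__":
    print(needs_retake((100, 200), (300, 202)))  # small tilt
    print(round(ita_degrees(70.0, 20.0), 1))
```

A capture app could call `needs_retake` on every frame and only accept the photo once it returns `False`. Digital alignment is the alternative the text mentions, at the cost of resampling the image.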

Step 2 — Facial landmark detection: Mapping the blueprint

Once a clean image is secured, the AI maps the facial geometry. Using models like Google MediaPipe or custom CNNs, the system identifies up to 468 distinct landmarks.

- Primary detectors: Eyes, jawline, lips, nose bridge, and cheekbones.

- Clinical utility: These points act as digital anchors. If you are planning a cheek filler, the AI uses these landmarks for symmetry scoring and to ensure that treatment simulations are anchored to the patient's actual bone structure, not just a floating image layer.
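
To make the idea of symmetry scoring concrete, here is a minimal sketch that assumes the landmarks have already been detected and paired left-to-right. It mirrors each right-side point across a vertical midline and measures how far it lands from its left-side partner. The 0-to-1 scaling by face width is an illustrative convention, not a published clinical score.

```python
import math

def symmetry_score(pairs, midline_x, face_width):
    """Return 1.0 for perfect left/right symmetry, lower for more mismatch.

    pairs: list of ((x_left, y_left), (x_right, y_right)) landmark pairs,
           for example the two outer eye corners or the two mouth corners.
    midline_x: x coordinate of the vertical facial midline, in pixels.
    face_width: face width in pixels, used to make the score scale-free.
    """
    errors = []
    for (xl, yl), (xr, yr) in pairs:
        # Reflect the right-side point across the midline.
        mirrored_x = 2 * midline_x - xr
        errors.append(math.hypot(xl - mirrored_x, yl - yr))
    mean_error = sum(errors) / len(errors)
    return max(0.0, 1.0 - mean_error / face_width)

if __name__ == "__main__":
    eye_corners = ((40, 50), (60, 50))
    mouth_corners = ((42, 80), (58, 83))  # right corner sits 3 px lower
    print(symmetry_score([eye_corners, mouth_corners], 50, 100))
```

Dividing by face width means the same face photographed at two resolutions produces the same score, which matters for comparing sessions over time.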

Step 3 — Skin segmentation: Regional diagnosis

The AI does not analyze the face as a single unit. It uses architectures like U-Net (an encoder-decoder network) to segment the face into regions of interest (ROI).

- Zone-based detection: It separates the T-zone, periorbital area, and neck.

- Precision: This allows for targeted treatment planning. For instance, it can ignore facial hair while focusing exclusively on the active skin surface for a chemical peel assessment.
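
The sketch below shows what "regional diagnosis" can look like once a segmentation model has produced a label for every pixel. It assumes two same-sized grids: a label mask (the output a U-Net style model might produce) and a per-pixel measurement such as a redness value. The label names and the idea of a redness map are assumptions for illustration.

```python
from collections import defaultdict

def zone_means(label_mask, value_map, ignore_labels=("background", "hair")):
    """Average a per-pixel measurement inside each segmented zone.

    label_mask: 2D list of zone names, one per pixel.
    value_map:  2D list of numbers with the same shape.
    Pixels whose label is in ignore_labels (for example facial hair)
    are skipped so they do not distort the skin reading.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for label_row, value_row in zip(label_mask, value_map):
        for label, value in zip(label_row, value_row):
            if label in ignore_labels:
                continue
            totals[label] += value
            counts[label] += 1
    return {label: totals[label] / counts[label] for label in totals}

if __name__ == "__main__":
    mask = [["forehead", "forehead"], ["hair", "cheek"]]
    redness = [[0.2, 0.4], [0.9, 0.6]]
    print(zone_means(mask, redness))
```

Note how the high value under the "hair" label never reaches the output, which is the same effect the chemical peel example above describes.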

Step 4 — Feature detection: How AI detects wrinkles and pores

This is the extraction phase. The AI uses multi-spectral analysis and convolutional neural networks to find:

- Wrinkles: By identifying shadow-depth patterns and line density.

- Pores: By calculating the count and diameter of follicle openings.

- Vascularity & pigment: By isolating specific color wavelengths (redness for rosacea, brown for UV damage) that may be invisible under standard room lighting.
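
As a rough illustration of how pores can be counted and sized, the sketch below labels connected blobs in a binary mask and converts each blob's area to an equivalent diameter. It assumes an earlier step (a CNN or a simple threshold) has already marked candidate pore pixels, and that the pixel-to-millimetre scale is known from calibration. Both are assumptions, and production systems are likely to be considerably more involved.

```python
import math
from collections import deque

def measure_blobs(binary_mask):
    """Return the pixel area of every 4-connected blob of truthy cells."""
    rows, cols = len(binary_mask), len(binary_mask[0])
    seen = [[False] * cols for _ in range(rows)]
    areas = []
    for r in range(rows):
        for c in range(cols):
            if not binary_mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill over one blob.
            queue = deque([(r, c)])
            seen[r][c] = True
            area = 0
            while queue:
                cr, cc = queue.popleft()
                area += 1
                for nr, nc in ((cr + 1, cc), (cr - 1, cc), (cr, cc + 1), (cr, cc - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and binary_mask[nr][nc] and not seen[nr][nc]):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            areas.append(area)
    return areas

def equivalent_diameter(area_px, mm_per_px):
    """Diameter in mm of a circle with the same area as the blob."""
    return 2.0 * math.sqrt(area_px / math.pi) * mm_per_px

if __name__ == "__main__":
    mask = [[1, 1, 0, 0],
            [1, 1, 0, 1],
            [0, 0, 0, 1]]
    areas = measure_blobs(mask)
    print(len(areas), [round(equivalent_diameter(a, 0.05), 3) for a in areas])
```

The pore count is simply `len(areas)`, and the diameters feed directly into the "count and diameter" metric described above.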

Step 5 — Prediction models: Clinical decision support

The final stage translates raw pixels into professional insights. The AI compares the patient's data against massive datasets to provide:

- Skin age estimates: How the skin compares to the chronological average.

- Botox & filler zones: Identifying dynamic vs static wrinkles to suggest optimal injection sites.

- Treatment suitability: Determining if a patient’s skin barrier is resilient enough for aggressive laser resurfacing.
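
One piece of this stage that is easy to sketch is the dynamic versus static distinction. A static wrinkle is visible with the face at rest, while a dynamic wrinkle appears only during expression. The example assumes each zone already has a wrinkle score from a resting photo and from an expression photo. The 0-to-1 scale, the zone names, and the 0.3 threshold are hypothetical.

```python
def classify_wrinkle(rest_score, expression_score, threshold=0.3):
    """Label a zone as 'static', 'dynamic', or 'none'.

    threshold is an illustrative cut-off, not a validated clinical value.
    """
    if rest_score >= threshold:
        return "static"      # visible even when the muscle is relaxed
    if expression_score >= threshold:
        return "dynamic"     # appears only when the muscle contracts
    return "none"

def classify_zones(rest_scores, expression_scores, threshold=0.3):
    """Classify every zone that appears in both score dictionaries."""
    return {
        zone: classify_wrinkle(rest_scores[zone], expression_scores[zone], threshold)
        for zone in rest_scores
        if zone in expression_scores
    }

if __name__ == "__main__":
    rest = {"glabella": 0.1, "forehead": 0.5, "crows_feet": 0.05}
    frown = {"glabella": 0.7, "forehead": 0.8, "crows_feet": 0.1}
    print(classify_zones(rest, frown))
```

Any output of this kind is a prompt for the clinician's own assessment rather than an injection plan in itself.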

By following this approach, clinics can provide patients with a facial analysis that feels like a medical diagnostic rather than a novelty app. This objective workflow is exactly how modern clinics build the high-trust environment necessary for complex aesthetic procedures.

How AI treatment simulation works

One of the most powerful conversion tools in a modern clinic is the ability to bridge the expectation gap. When a patient asks, "Will I still look like myself?", they are seeking reassurance that only a visual can provide. This is the domain of AI treatment simulation for aesthetic clinics, a sophisticated modeling process that transforms a static photo into a dynamic, predictive roadmap.

Contemporary AI filler result visualization tools and AI Botox simulation software for clinics use anatomical physics to predict how tissues will move, stretch, and settle.

The layers of a clinical simulation

To generate a before-and-after AI simulation of a cosmetic procedure, the software processes the image through four specialized layers:

- Landmark anchoring: Before any movement occurs, the AI locks onto the 468+ landmarks discussed in Step 2 above. These anchors ensure that the predicted changes, such as a brow lift or jawline sharpening, stay anatomically tethered to the patient's bone structure. This prevents the drifting effect seen in low-quality apps.

- Volume projection: This layer is essential for fillers. The AI simulates the physical displacement of skin based on volumetric units. It doesn't just lighten a shadow; it estimates how 1cc of hyaluronic acid may affect mid-face volume projection (the cheek area) and how that lift affects the nasolabial fold below it.

- Muscle relaxation modeling: For neurotoxins like Botox, the AI predicts the reduction of dynamic movement. By analyzing the intensity of contraction in a patient's scowl or forehead, the software can render a softened state that mimics the effect of muscle relaxation on the overlying skin.

- 3D morphing & rendering: Finally, the system uses 3D morphing algorithms to generate a high-fidelity visual outcome. This allows the patient to see their face from multiple angles, providing a realistic expectation of profile changes, such as a non-surgical rhinoplasty or chin augmentation.
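
The layers above can be hard to picture, so here is a deliberately simplified 2D sketch of the anchoring and volume projection ideas. Points near an anchor landmark are pushed along a direction, with the push fading smoothly with distance. This is a geometric toy under stated assumptions, not a tissue-physics model: the Gaussian falloff, the pixel units, and every parameter name are illustrative, and nothing here maps a product volume to a displacement.

```python
import math

def project_volume(points, anchor, direction, peak_shift, radius):
    """Shift points near `anchor` along `direction`, fading with distance.

    points:     list of (x, y) mesh or landmark coordinates in pixels.
    anchor:     (x, y) landmark the change is tethered to.
    direction:  (dx, dy) vector giving the push direction (any length).
    peak_shift: displacement in pixels applied exactly at the anchor.
    radius:     Gaussian falloff scale in pixels.
    """
    norm = math.hypot(direction[0], direction[1])
    ux, uy = direction[0] / norm, direction[1] / norm
    moved = []
    for x, y in points:
        dist = math.hypot(x - anchor[0], y - anchor[1])
        weight = math.exp(-(dist ** 2) / (2 * radius ** 2))
        moved.append((x + ux * peak_shift * weight,
                      y + uy * peak_shift * weight))
    return moved

if __name__ == "__main__":
    cheek_points = [(0.0, 0.0), (5.0, 0.0), (100.0, 0.0)]
    # Push "up" (negative y in image coordinates) around the first point.
    print(project_volume(cheek_points, (0.0, 0.0), (0, -1), 2.0, 5.0))
```

Because the displacement is defined relative to the anchor, the simulated change moves with the landmark if the face shifts in the frame, which is the point of the anchoring layer.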

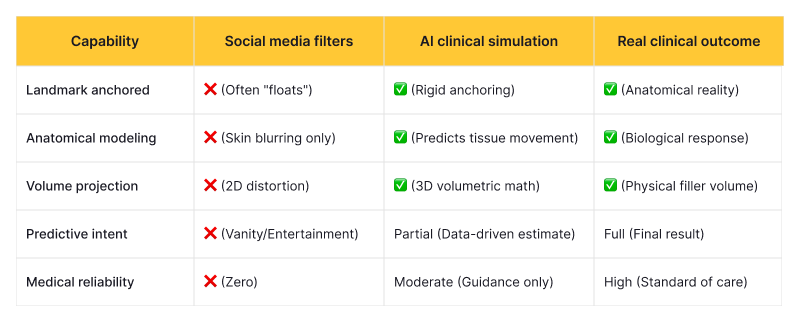

Simulation vs. filters vs. real outcomes

It is critical for practitioners to explain that while AI is predictive, it is not a guarantee. Comparing clinical AI to social media filters helps patients understand the medical intent behind the technology.

By using an AI filler result visualization tool, clinicians can shift the consultation from a verbal "trust me" to a collaborative "let's look at the data." This transparency not only increases procedure uptake but also reduces post-treatment dissatisfaction by ensuring expectations are grounded in anatomical reality.

How accurate AI skin analysis really is

For any clinic, the transition to high-tech consultations hinges on a single question: "Can I trust the data?" Readers who are evaluating these tools for clinical use need more than marketing promises; they require empirical evidence of medical-grade accuracy.

The reality of 2026 is that AI is no longer just an experimental assistant. In many metrics, it has reached, and occasionally exceeded, the consistency of human experts. However, understanding the nuance of these statistics is vital for maintaining healthcare compliance and patient safety.

The benchmarks of modern accuracy

Current research indicates that dermatology AI sensitivity commonly ranges from 90–98% across various classification tasks, such as detecting sub-clinical sun damage or early-stage fine lines. For clinics, this means fewer missed opportunities for preventative care and a much higher baseline for standardized assessments.

The Balancing Statement: Despite these high scores, accuracy is not a plug-and-play guarantee. AI performance can drop significantly, sometimes by as much as 27–40%, on diverse real-world datasets if the model was not trained on a representative range of ethnicities and skin types. A model that is 98% accurate on Fitzpatrick Type II skin may struggle with Type VI without proper dataset diversity.
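
A clinic evaluating a vendor can check this for itself by asking for results broken down by skin type. The sketch below computes sensitivity, defined as true positives divided by all actual positives, for each subgroup and reports the largest gap between groups. The counts in the example are made-up placeholders, not results from any study.

```python
def sensitivity(true_positives, false_negatives):
    """Share of actual positive cases that the model caught."""
    total = true_positives + false_negatives
    if total == 0:
        raise ValueError("no positive cases in this group")
    return true_positives / total

def sensitivity_by_group(counts):
    """counts: {group_name: (true_positives, false_negatives)}"""
    return {group: sensitivity(tp, fn) for group, (tp, fn) in counts.items()}

def largest_gap(per_group):
    """Difference between the best and worst performing subgroup."""
    values = list(per_group.values())
    return max(values) - min(values)

if __name__ == "__main__":
    # Placeholder counts for illustration only.
    counts = {"Fitzpatrick II": (98, 2), "Fitzpatrick VI": (70, 30)}
    per_group = sensitivity_by_group(counts)
    print(per_group, round(largest_gap(per_group), 2))
```

A single headline figure can hide exactly this kind of gap, which is why the per-group view is the more useful one for a diverse patient base.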

By attending to dataset diversity and real-world validation, clinics can ensure that their AI skin analysis accuracy isn't just a number on a brochure, but a reliable foundation for every treatment plan they recommend. Objective data builds trust, and trust is the ultimate driver of patient retention in aesthetic medicine.

Where off-the-shelf AI tools fall short

As the market for digital dermatology matures, many clinics are finding that the "plug-and-play" convenience of general SaaS (Software as a Service) platforms comes with a hidden ceiling. While these tools offer a low barrier to entry, they often lack the technical depth and operational flexibility required by high-volume, premium medical practices.

Bridging the gap between informational curiosity and a clinical solution requires an honest look at the limitations of off-the-shelf AI skin analysis tools. For a clinic scaling its operations, a generic app can quickly transition from an asset to a bottleneck.

The limitations of one-size-fits-all AI

- Generic datasets & the diversity gap: Most off-the-shelf tools are trained on publicly available datasets that frequently skew toward specific demographics. This leads to a critical drop in accuracy for underrepresented skin tones.

- The reality check: Research indicates that performance drops on diverse real-world datasets when proper dataset tuning is absent. For a global clinic, this inaccuracy is a significant liability.

- Limited treatment logic tuning: A generic app might suggest hydration for a dry skin reading. However, a specialized clinic may want the AI to suggest a specific proprietary chemical peel or a branded injectable protocol. Off-the-shelf tools rarely allow you to teach the AI your specific clinical philosophy.

- Fragmented workflow integration: Clinical efficiency relies on a single source of truth. Many SaaS tools operate as silos, requiring staff to manually transfer photos or data into the clinic's primary EMR (Electronic Medical Record). This double-handling increases the margin for administrative error.

- Compliance rigidity: While many apps claim to be secure, they often lack the granular controls, such as specific audit logs or configurable data-retention policies, required for enterprise-level healthcare compliance.

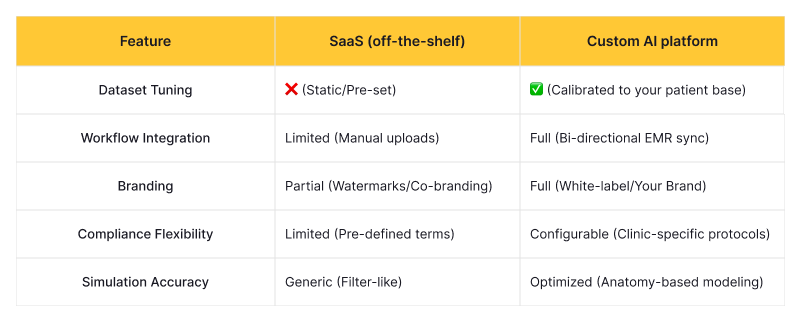

Custom vs. SaaS facial analysis platforms

When choosing between white-label AI skin analysis and custom development, clinics must weigh immediate speed against long-term clinical utility.

While SaaS tools are excellent for pilot programs, custom AI aesthetic software is becoming the standard for clinics that view AI not as a gimmick, but as a core pillar of their diagnostic excellence. In the next section, we will explore why this shift toward customization is the key to unlocking true clinical ROI.

Why clinics move toward custom AI consultation platforms

As aesthetic practices scale, the standardized nature of off-the-shelf software often becomes a friction point. Premium clinics are increasingly moving toward custom AI facial analysis software for clinics, not merely for the sake of innovation, but to solve specific operational and clinical gaps that generic SaaS tools cannot bridge.

Building custom AI consultation software for dermatology represents a strategic shift from using AI as a gimmick to using it as a core diagnostic and revenue-driving engine. By tailoring the AI to a clinic's unique philosophy, practitioners can automate the information-gathering phase of a consultation while maintaining the high-touch personalization patients expect.

The five layers of AI customization

When comparing custom AI facial analysis with SaaS aesthetics platforms, the value lies in these five proprietary layers:

- Dataset tuning & relevance: Generic tools are trained on broad, public datasets. Custom platforms allow you to fine-tune models on your own patient data (with proper consent), ensuring the AI understands the specific skin types, age ranges, and aesthetic concerns prevalent in your local demographic. This leads to higher diagnostic relevance and fewer false positives in skin readings.

- Treatment mapping & logic: This is the brain of the platform. Instead of a generic recommendation, a custom system maps the analysis directly to your clinic's service menu. If the AI detects mid-face volume loss, it can automatically suggest your specific "Liquid Facelift" protocol or a combination therapy of Radiesse and Ultherapy, based on your clinical preferences.

- Seamless EMR/CRM integration: A custom build eliminates the silo effect. The AI analysis, photos, and recommended treatment plans are pushed directly into the patient's record in your EMR. This creates a unified workflow where the front desk, the practitioner, and the follow-up marketing team are all looking at the same data.

- Device & hardware calibration: Off-the-shelf apps must work on every smartphone camera, often compromising accuracy for compatibility. A custom solution can be calibrated for the specific professional cameras or 3D scanners your clinic uses, maximizing the accuracy of the facial analysis.

- Branding & patient trust: A white-labeled platform ensures the patient stays within your brand's ecosystem. Using a bespoke tool with your clinic’s logo and UI design reinforces a premium positioning strategy, signaling to the patient that they are receiving a signature experience unique to your practice.
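
The treatment mapping layer is the easiest of the five to sketch. At its simplest it is a rule table that connects a finding to a protocol on the clinic's own service menu. In the example below, "Liquid Facelift" comes from the text above; every other finding name, protocol name, and severity threshold is a hypothetical placeholder.

```python
# Hypothetical rule table: finding -> (minimum severity, clinic protocol).
PROTOCOL_RULES = {
    "midface_volume_loss": (0.5, "Liquid Facelift"),
    "dryness": (0.4, "Hydration protocol"),
    "pigmentation": (0.6, "Brightening peel protocol"),
}

def suggest_protocols(findings, rules=PROTOCOL_RULES):
    """Return protocol names for findings that meet their threshold.

    findings: {finding_name: severity between 0 and 1}
    The result is ordered from most to least severe finding and is meant
    as a starting point for the practitioner, not an automatic plan.
    """
    suggestions = []
    for finding, severity in findings.items():
        rule = rules.get(finding)
        if rule is None:
            continue  # no clinic rule for this finding
        min_severity, protocol = rule
        if severity >= min_severity:
            suggestions.append((severity, protocol))
    suggestions.sort(reverse=True)
    return [protocol for _, protocol in suggestions]

if __name__ == "__main__":
    analysis = {"midface_volume_loss": 0.7, "dryness": 0.45, "pore_size": 0.9}
    print(suggest_protocols(analysis))
```

Keeping the rules in a plain table, separate from the model, lets clinical staff change the service menu without retraining anything.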

By investing in a custom AI consultation platform, clinics move beyond the limitations of generic software. They gain a digital assistant that speaks their language, follows their protocols, and scales their expertise—allowing the practitioner to focus on the art of the procedure while the AI handles the science of the assessment.

Compliance requirements for AI facial analysis in clinics

As AI moves from a novelty to a core clinical tool, it enters a high-stakes regulatory environment. For medical directors and stakeholders, the primary concern isn't just "Does it work?" but "Is it legal?" Handling high-resolution patient imagery, often the most sensitive form of Protected Health Information (PHI), requires more than just a password.

Achieving true healthcare compliance for AI skin analysis software requires a multi-layered approach to data governance that spans international borders and regional mandates.

Navigating the regulatory landscape

HIPAA & GDPR: The global gold standards

In the United States, HIPAA-compliant AI facial analysis software should adhere to the Security Rule, which calls for technical safeguards such as encryption on any device capturing or storing facial data. In Europe, the GDPR (General Data Protection Regulation) goes a step further by classifying biometric data used to identify a person, which can include facial landmarks, as a special category of data. Processing it generally requires explicit, informed consent, and patients also hold a right to erasure (the "right to be forgotten").

PHI storage & encryption

Images used for AI analysis are not just photos; they are medical records. To prevent data breaches, information must be encrypted both at rest (on the server) and in transit (while being sent from the camera to the AI model). Custom platforms allow clinics to choose where this data lives, whether on-premise or in a secure medical cloud, ensuring the clinic maintains total sovereignty over its PHI.

Audit logs & traceability

A compliant system must maintain a detailed digital trail. Audit logs should record every time a patient's file is accessed, who accessed it, and what changes (if any) were made to the analysis. This is essential for both internal quality control and external legal protection in the event of a dispute.
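
One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that editing or deleting an old entry breaks every hash after it. The sketch below is a minimal in-memory version using only the Python standard library. The field names are assumptions, and a real system would also need durable storage, access control, and trusted timestamps.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

def _digest(body):
    # Stable serialization so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log, user, patient_id, action, timestamp):
    """Append an access record that includes the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"user": user, "patient_id": patient_id, "action": action,
            "timestamp": timestamp, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})

def verify_chain(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

if __name__ == "__main__":
    log = []
    append_entry(log, "dr_lee", "patient-001", "viewed_analysis", "2026-01-10T09:00:00Z")
    append_entry(log, "nurse_kim", "patient-001", "edited_notes", "2026-01-10T09:05:00Z")
    print(verify_chain(log))
    log[0]["action"] = "nothing_happened"  # simulated tampering
    print(verify_chain(log))
```

Each entry answers the three questions from the paragraph above: when the file was accessed, who accessed it, and what was done.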

Consent tracking & model transparency

Modern compliance also involves "Explainable AI." Clinicians must be able to explain how the AI reached its conclusion to avoid black box liability. Furthermore, consent tracking must be integrated directly into the software workflow, ensuring that patients have signed off on the use of their data specifically for AI processing before any analysis begins.
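
Consent tracking "integrated directly into the software workflow" can be as simple as a gate that refuses to run the analysis unless a matching consent record exists. The sketch below assumes a hypothetical `ConsentRecord` shape and a purpose string of `"ai_analysis"`. Real systems would typically also track consent versions, dates, and withdrawals.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ConsentRecord:
    patient_id: str
    purpose: str      # what the patient agreed to, e.g. "ai_analysis"
    granted: bool

class ConsentError(Exception):
    """Raised when analysis is attempted without recorded consent."""

def run_analysis_with_consent(patient_id, consents, analyze):
    """Call analyze(patient_id) only if AI-specific consent is on file."""
    for record in consents:
        if (record.patient_id == patient_id
                and record.purpose == "ai_analysis"
                and record.granted):
            return analyze(patient_id)
    raise ConsentError(f"no AI-processing consent on file for {patient_id}")

if __name__ == "__main__":
    consents = [
        ConsentRecord("patient-001", "ai_analysis", True),
        ConsentRecord("patient-002", "photography", True),  # not AI-specific
    ]
    print(run_analysis_with_consent("patient-001", consents, lambda pid: f"analyzed {pid}"))
    try:
        run_analysis_with_consent("patient-002", consents, lambda pid: f"analyzed {pid}")
    except ConsentError as err:
        print(err)
```

The second patient agreed to photography but not to AI processing, so the gate blocks the analysis, which mirrors the "specifically for AI processing" requirement above.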

By prioritizing these compliance layers, clinics do more than just avoid fines; they build an enterprise-level reputation for data integrity. In an era where digital privacy is a top patient concern, showing that your HIPAA-compliant AI facial analysis software takes security seriously is a powerful way to differentiate your practice from less rigorous competitors.

How clinics can develop their own AI facial analysis software

For enterprise-level med spas and multi-location dermatology groups, the conversation eventually shifts from "What can I buy?" to "How can I build?" Developing AI facial analysis software for a clinic is no longer a task reserved for tech giants; it is a structured engineering process that aesthetic businesses can navigate through specialized partnerships.

AI software development for aesthetic medicine is a pipeline that moves from raw data to a refined clinical tool. Whether you choose to build in-house or outsource the development, the following phases are non-negotiable for a successful launch.

The development pipeline

- Dataset selection & curation: The intelligence of your AI is only as good as the images it learns from. This phase involves gathering thousands of high-quality, standardized clinical photos. Crucially, this must include a diverse range of Fitzpatrick skin types to ensure your AI consultation software is inclusive and accurate for all patients.

- Data annotation: Raw images must be labeled. Human experts (usually dermatologists or trained aestheticians) manually identify wrinkles, pigment spots, and landmarks in the training photos. This ground truth data teaches the AI exactly what to look for.

- Model training: Engineers use frameworks like TensorFlow or PyTorch to train neural networks. During this phase, the AI learns to recognize patterns between the annotated features and the raw pixels.

- Validation & clinical testing: Before deployment, the software is tested against a hold-out set of images it has never seen before. Its results are compared to human expert assessments to calculate its accuracy scores.

- Deployment & integration: The final step is wrapping the AI in a user-friendly interface and integrating it with your existing EMR or CRM systems.
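
The dataset and validation phases above meet in one practical step: setting aside a hold-out set that still represents every skin type. The sketch below shows a stratified split using only the standard library. The record layout and the `fitzpatrick` key are assumptions for illustration, and real projects often split by patient rather than by image so the same person never appears on both sides.

```python
import random
from collections import defaultdict

def stratified_holdout(records, key, holdout_fraction=0.2, seed=0):
    """Split records into (train, holdout), sampling each group separately.

    records: list of dicts, each containing `key` (for example a
             Fitzpatrick type from 1 to 6).
    Every group contributes at least one record to the hold-out set, so
    a group with a single record ends up entirely in the hold-out set.
    """
    by_group = defaultdict(list)
    for record in records:
        by_group[record[key]].append(record)

    rng = random.Random(seed)  # fixed seed keeps the split repeatable
    train, holdout = [], []
    for group in sorted(by_group):
        items = by_group[group][:]
        rng.shuffle(items)
        n_holdout = max(1, round(len(items) * holdout_fraction))
        holdout.extend(items[:n_holdout])
        train.extend(items[n_holdout:])
    return train, holdout

if __name__ == "__main__":
    photos = [{"id": f"{t}-{i}", "fitzpatrick": t}
              for t in range(1, 7) for i in range(10)]
    train, holdout = stratified_holdout(photos, "fitzpatrick")
    print(len(train), len(holdout))
```

Splitting within each group is what keeps a rare skin type from vanishing from the test set by chance, which would otherwise make the accuracy figure look better than it is.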

Choosing your strategy: Build vs. buy vs. hybrid

Deciding how to approach development depends on your clinic's budget, timeline, and need for proprietary features.

While a custom build requires a higher upfront investment, it grants the clinic full ownership of its "Digital Brain." By owning the software, you eliminate per-user licensing fees and ensure that your AI consultation software mirrors your signature clinical protocols.

Future of AI facial analysis in aesthetic medicine

We are currently standing at the baseline of what artificial intelligence can achieve in the clinical setting. While today's tools focus on static diagnosis and simple overlays, the next three to five years will see a shift toward proactive, predictive, and multi-dimensional analysis. For aesthetic clinics, the roadmap for AI facial analysis moves from simply detecting to forecasting.

As technology evolves, the virtual skin consultation platform will transform from a digital mirror into a sophisticated clinical simulation lab.

Exploring next-generation capabilities

- Real-time simulation (Live Video): Future platforms will move beyond static photos to live video-based simulations. As a patient speaks or smiles, the AI will show how Botox or filler will affect dynamic movement in real-time, providing a far more realistic expectation of post-procedure naturalness.

- 3D volumetric prediction: While current 2D-to-3D mapping is impressive, the future lies in true volumetric forecasting. AI may be able to estimate how much product is required to achieve a specific lifting effect, potentially at sub-milliliter precision, based on the patient's unique tissue density and elasticity.

- Longitudinal skin tracking: This is perhaps the most significant shift for patient retention. Clinics will be able to use "Time-Machine" AI to track a patient’s skin health over decades. By comparing scans from five years ago to today, the AI can quantify the rate of aging and prove the efficacy of long-term maintenance protocols.

- Treatment optimization loops: AI will eventually act as a global clinical researcher. By analyzing thousands of successful (and unsuccessful) outcomes across a clinic network, the AI can suggest the optimal treatment path for a specific patient profile, essentially crowdsourcing clinical excellence to improve individual results.
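
Of the capabilities above, longitudinal tracking is the one whose core arithmetic already exists today: "rate of aging" can be read as the slope of a score over time. The sketch below fits a least-squares line through repeated scans. It assumes a single numeric score per scan, such as a wrinkle index, and the numbers in the example are invented for illustration.

```python
def trend_per_year(scans):
    """Least-squares slope of score against time.

    scans: list of (years_since_first_scan, score) tuples.
    A positive result means the score is rising by that much per year.
    """
    n = len(scans)
    if n < 2:
        raise ValueError("need at least two scans to estimate a trend")
    mean_t = sum(t for t, _ in scans) / n
    mean_s = sum(s for _, s in scans) / n
    numerator = sum((t - mean_t) * (s - mean_s) for t, s in scans)
    denominator = sum((t - mean_t) ** 2 for t, _ in scans)
    if denominator == 0:
        raise ValueError("scans must be taken at different times")
    return numerator / denominator

if __name__ == "__main__":
    # Invented wrinkle-index readings over two years.
    before_protocol = [(0.0, 10.0), (1.0, 12.0), (2.0, 14.0)]
    print(trend_per_year(before_protocol))
```

Comparing the slope before and after a maintenance protocol starts is one simple way to express the "prove the efficacy" idea, provided the photos were captured under consistent conditions.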

The future of aesthetic medicine is one where the clinician’s artistic eye is perfectly balanced by the AI’s mathematical precision. By adopting these forward-looking technologies early, clinics can move beyond the standard of care and define a new standard of excellence.

What clinics should take away before adopting AI facial analysis

Adopting artificial intelligence is a pivotal step in modernizing an aesthetic practice. To fully leverage this technology, practitioners must understand the foundational mechanics of how AI facial analysis works. It is a sophisticated multi-stage workflow that begins with precise landmark detection to anchor the face, followed by segmentation to isolate specific skin zones. From there, the system moves into feature detection to quantify concerns like UV damage and fine lines, ultimately utilizing prediction modeling and simulation support to provide a data-driven roadmap for patient care.

However, a successful implementation requires grounded expectations. While AI skin analysis accuracy has reached impressive benchmarks, often rivaling expert dermatologists in diagnostic consistency, it remains a decision-support tool rather than a replacement for clinical judgment. The most effective AI facial analysis for aesthetic clinics acts as a digital co-pilot, providing objective data that reinforces the practitioner's expertise and standardizes the consultation experience across the entire staff.

For many practices, the choice between a standard app and custom AI facial analysis software for clinics depends on long-term goals. While off-the-shelf tools provide a quick entry point, custom platforms offer superior workflow integration, proprietary dataset tuning for diverse skin types, and higher simulation accuracy. For AI consultation software in dermatology clinics, the ability to maintain compliance flexibility and brand consistency is often what transforms a software tool into a core business asset.

The integration of AI into aesthetic medicine is no longer a futuristic concept: it is a competitive necessity for the modern clinic. By mastering the technical layers of how AI facial analysis works and prioritizing AI skin analysis accuracy, practitioners can transform subjective consultations into objective, data-driven experiences.

Whether you are looking to enhance patient trust through high-fidelity simulations or streamline your operations with custom AI facial analysis software for clinics, the right platform serves as a powerful bridge between clinical expertise and patient expectations.

Don't let your practice rely on guesswork; embrace the precision of AI consultation software for dermatology to standardize your results, scale your expertise, and lead the next generation of aesthetic excellence.