An AI readiness checklist for your company before you invest

Today, companies are actively investing in AI, and many are wasting significant budgets in the process. The core issue is not the technology itself, but the lack of AI readiness before implementation.

So what does AI readiness actually mean?

- Clear business goals tied to measurable outcomes

- Data readiness and accessibility

- Internal capability to execute

- Realistic ROI expectations

This article provides a complete AI readiness checklist, real-world examples, and a practical decision framework to help you invest in AI with confidence.

AI readiness checklist (short version before the deep dive)

Before investing in AI, it’s important to evaluate whether your company is actually prepared to implement it in a way that delivers value. Most AI projects fail not because of model quality, but because businesses skip this evaluation phase.

This checklist helps you determine whether you are ready for AI — technically, strategically, and economically.

1. Business clarity

Start with the problem, not the technology:

- Do you have a clearly defined business problem AI should solve?

- Can this problem be tied to a measurable metric (cost, revenue, time, efficiency)?

Important: If you cannot define the problem clearly, AI will not create predictable value.

2. Use case definition

Define exactly how AI fits into your product or business:

- What value do you expect AI to bring, and where exactly?

- Will it improve internal operations or user experience?

- Will it be a supporting feature or a core capability of your product?

Important: This step determines the scope, cost, and complexity of the entire AI initiative.

3. Data readiness

AI systems are only as strong as the data behind them:

- Is your data structured?

- Is it accessible across systems?

- Is it clean, consistent, and usable for modeling or LLM integration?

Important: Poor data quality is one of the main reasons AI projects fail.

4. ROI expectation

AI should be evaluated as an investment, not an experiment without limits:

- Can you estimate implementation cost?

- Can you estimate ongoing costs (tokens, infrastructure, maintenance)?

- Can you define expected business return or efficiency gain?

Important: If ROI cannot be estimated, scaling AI becomes risky.

5. Technical foundation

Check whether your system can actually support AI integration:

- Do you have basic infrastructure in place?

- Are APIs and integrations available?

- Can you build and test a minimum viable product (MVP)?

Important: Without this foundation, even simple AI use cases become expensive.

6. Internal capability

AI adoption requires ownership, not just interest:

- Is there clear product ownership for AI initiatives?

- Do you have engineering capacity to implement and maintain solutions?

- Are decision-makers involved in defining scope and priorities?

Important: Without internal ownership, AI projects lose direction quickly.

7. Execution approach

Define how you will approach AI adoption:

- Are you starting with an experiment (MVP)?

- Or are you committing to a full-scale strategy from the beginning?

Important: Most companies should start with experimentation before scaling.

8. Architecture flexibility

Avoid locking yourself into rigid systems early:

- Can you switch between AI models if needed?

- Is your architecture flexible enough to avoid vendor lock-in?

Important: Flexibility is critical in a rapidly evolving AI ecosystem.

If you answered “no” to several sections, your company is likely not ready to scale AI yet. In most cases, the right next step is not implementation, but validation, data preparation, or working with an AI consulting partner to define the right use cases first.

Why do most companies get AI wrong?

AI expertise is widely available today, and many companies position themselves as AI experts in a rapidly growing market. But what actually distinguishes effective AI consulting for mid-market companies and startups from hype-driven approaches is not knowledge. It is execution and measurable outcomes.

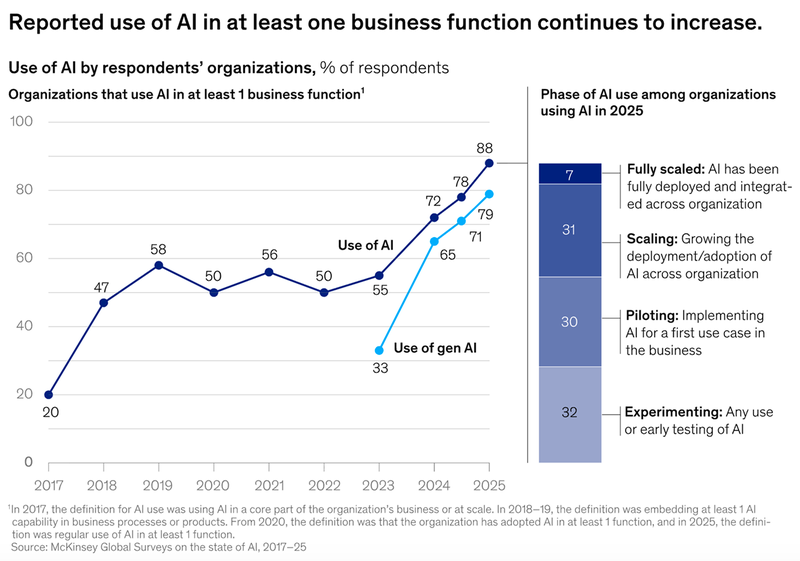

According to McKinsey research, although AI adoption is increasing rapidly, most organizations are still in the early stages of maturity. While 88% of companies report using AI in at least one business function, only about one-third have moved beyond experimentation into scaling AI across the enterprise.

Source: McKinsey & Company

Companies often make the same avoidable mistakes when deciding where and how to apply AI.

- The “AI-everything” product trap. From a product perspective, adding AI everywhere may seem valuable, but it often leads to unnecessary complexity and higher costs. As the parking app example later in this article shows, a lightweight model can be more effective than a large-scale LLM.

- Skipping data readiness. Whenever you want the AI to work with your own custom knowledge, expect to spend more time preparing data, interconnections, and dependencies than on development itself. An 80/20 split in favor of data preparation is often realistic.

- Single vendor dependency. Avoid locking your product into one AI provider. Introduce an abstraction layer in your architecture to maintain flexibility.
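To illustrate the abstraction layer mentioned in the last point, here is a minimal Python sketch. All names in it (`TextModel`, `EchoModel`, `ModelRegistry`) are hypothetical and invented for this example. `EchoModel` is a stand-in for a real vendor adapter, which would call the provider's SDK inside `complete`.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


class TextModel(Protocol):
    """Anything that can turn a prompt into a completion."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in provider for this sketch; a real adapter would call a vendor SDK here."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRegistry:
    """The only object product code talks to; vendors are swapped behind it."""

    def __init__(self) -> None:
        self._models: Dict[str, TextModel] = {}
        self._active: Optional[str] = None

    def register(self, key: str, model: TextModel) -> None:
        self._models[key] = model
        if self._active is None:  # the first registered model becomes the default
            self._active = key

    def use(self, key: str) -> None:
        if key not in self._models:
            raise KeyError(f"unknown model: {key}")
        self._active = key

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no model registered")
        return self._models[self._active].complete(prompt)
```

With this shape, switching providers is one call to `registry.use(...)` or one configuration value, and the rest of the product code does not change.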

Real AI failure cases (and what they teach us)

1. Zillow Offers: AI without real-world validation

Zillow used AI models to predict home prices and started buying houses at scale. The models couldn’t accurately handle real-world market volatility. Pricing errors accumulated quickly, leading to massive losses. Zillow shut down the entire division after losing over $500 million.

What this teaches us: AI models that work in theory can fail in dynamic, real-world environments if they are not continuously validated and constrained.

2. IBM Watson Health: Overpromising without usable data

A well-known example is IBM Watson Health, which was positioned as an AI system to transform healthcare decision-making. In reality, it struggled due to inconsistent and unstructured data, as well as difficulties integrating into real hospital workflows. As a result, some recommendations were unreliable, limiting real-world adoption.

IBM eventually scaled back the initiative and sold parts of Watson Health.

What this teaches us: Even advanced AI fails without high-quality, structured, and integrated data and a practical path into real workflows.

3. Amazon AI recruiting tool: Bias from bad training data

Another example is Amazon, which developed an AI system to screen job candidates. The model was trained on historical hiring data, but that data reflected existing biases, leading the system to penalize resumes from women.

The project was eventually abandoned. The case highlights a critical risk: AI systems inherit the biases and limitations of their data, and without proper validation they can create serious business and ethical issues.

What this teaches us: AI is not objective by default. It reflects historical data, including its flaws, unless actively corrected.

What does AI consulting mean?

An AI consulting company answers a simple question: how to apply AI in your business — and why it should be done.

Then the solution is designed and implemented. The result is a working, outcome-driven system, not just an idea.

A strong generative AI consulting company typically:

- connects business goals with AI capabilities

- validates ideas before building

- focuses on ROI instead of trends

This is especially important in AI consulting for startups and mid-market companies, where every investment decision matters.

When does AI make sense?

You typically need AI when:

- you want to improve operations

- you want to improve customer experience

- you are unsure whether AI should be a feature or a core capability

Example 1: Should you even add AI?

Suppose you own a crowdsourced parking availability app. The system lets users share the spot they are about to free up. You have about 10,000 active users and about 2,000 parking events per day. The app has been in development for 2 years (1 year of active development, 1 year of support). The open question is whether it makes sense to add AI to it. We start with 4 simple questions:

- Would you like AI to enhance the operations?

- Would you like AI to enhance the user experience?

- Would you like AI to be a stand-alone feature?

- Would you like AI to become a core feature of the product?

These questions define the scope, cost, and depth of AI investment.

Example 2: AI improving operations

The problem: Your managers spend a lot of time on client support. Specifically, they manually handle complaints from users who arrive at a shared spot and find it taken. As the CEO, you want them to spend less time on routine tasks and more time working with new prospects.

As an AI consultancy, we map out how AI can change these operations, and it can change them drastically. Our implementation: AI automatically classifies disputes, cross-references them with timestamps and other user reports, and issues automated responses. Only edge cases are escalated to the support team.

This is a high-ROI use case because it directly reduces cost and saves time.
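The triage flow in this example can be sketched roughly as follows. This is an illustration under stated assumptions, not our production logic: the field names, the outcome labels, and both time thresholds are hypothetical. The `category` field stands for the label produced by an upstream classifier, which is not shown here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Dispute:
    spot_id: str
    shared_at: datetime   # when the spot was announced as free
    arrived_at: datetime  # when the complaining user reached the spot
    category: str         # label from an upstream classifier, e.g. "spot_taken"


@dataclass
class Report:
    spot_id: str
    reported_at: datetime
    spot_was_free: bool


STALE_AFTER = timedelta(minutes=10)   # assumed: a shared spot is "expired" after this
REPORT_WINDOW = timedelta(minutes=5)  # assumed: how close in time other reports must be


def triage(dispute: Dispute, reports: List[Report]) -> str:
    """Return an automated outcome, or "escalate" for anything ambiguous."""
    if dispute.category != "spot_taken":
        return "escalate"
    # Cross-reference 1: timestamps. Arriving long after the share is not a valid complaint.
    if dispute.arrived_at - dispute.shared_at > STALE_AFTER:
        return "auto_reply_expired"
    # Cross-reference 2: other users' reports for the same spot around the arrival time.
    nearby = [
        r for r in reports
        if r.spot_id == dispute.spot_id
        and abs(r.reported_at - dispute.arrived_at) <= REPORT_WINDOW
    ]
    if nearby and all(not r.spot_was_free for r in nearby):
        return "auto_reply_confirmed"
    return "escalate"  # no evidence or conflicting evidence: a human decides
```

The design choice worth noting is that escalation is the default: the automated paths fire only when the evidence is unambiguous.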

Example 3: When NOT to overbuild AI

On the other hand, if you are just starting the journey, the process changes. We will ask you:

- Would you like AI to be a stand-alone feature?

- Would you like AI to become a core feature of the product?

There are fewer questions, but both are more complex.

Say you choose a stand-alone feature: the app analyses how often new free spots appear in a given location and tells the user the time window in which a spot there becomes free on average. In this case, we would advocate against using AI as the core prediction engine. The backend can easily store GPS coordinates, timestamps, and estimated departure times, and compute these averages directly. A lightweight LLM gets only a minimal role: parsing fuzzy location descriptions or generating smart notifications (both are described in Example 4).

This is the difference between effective AI adoption and unnecessary complexity.
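To show how little machinery the non-AI version of this feature needs, here is a minimal sketch that finds the hour of day in which spots in a zone are freed most often. The zone identifiers, the `(zone, timestamp)` event shape, and both function names are assumptions made for this example.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, Optional, Tuple

FreedEvent = Tuple[str, datetime]  # (zone id, time the spot was freed)


def busiest_hour(events: Iterable[FreedEvent], zone: str) -> Optional[int]:
    """Hour of day (0-23) with the most freed spots in the zone, or None without data."""
    hours = Counter(ts.hour for z, ts in events if z == zone)
    if not hours:
        return None
    return hours.most_common(1)[0][0]


def describe_window(hour: int) -> str:
    """Format an hour as a one-hour window, e.g. 18 becomes "18:00-19:00"."""
    return f"{hour:02d}:00-{(hour + 1) % 24:02d}:00"
```

A plain aggregation like this costs nothing in tokens and is easy to test, which is why we would keep the LLM out of the core prediction path.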

Example 4: ROI-driven AI adoption

AI, like every feature, can either boost or burden your app, and which one it does depends mainly on the ROI of AI adoption. We advise not only on the roadmap but also on the margin model. The first simple piece of advice: don’t build and train a custom model, because the money you spend can be lost as soon as a major player turns the same capability into a commodity.

Many early AI startups built their products primarily around prompt engineering as a core differentiator. As foundation models rapidly improved and platforms like OpenAI and Anthropic began integrating similar capabilities directly, many of these “wrapper-style” products lost their competitive edge or were forced to pivot.

For the parking mobile app, AI is useful in two places:

- Parsing fuzzy location descriptions: If a user types "the spot near the pharmacy on Main Street" instead of dropping a pin, an LLM can resolve that to coordinates.

- Smart notifications: Generating context-aware messages: "A spot just opened 200 meters from your destination."

Pure ROI comparison:

- Compared to LLM-based prediction logic, a lightweight model means roughly 3 times lower initial development costs, 5 times lower monthly maintenance costs, and 10 times lower token costs.

- If we dig deeper, the only feature clearly worth building is parsing fuzzy location descriptions. It handles natural-language input gracefully, leads to fewer failed lookups, comes at a moderate cost, and brings a real UX improvement.
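The comparison above can be turned into a quick back-of-the-envelope calculation. In the sketch below, only the 3x, 5x, and 10x ratios come from this article. The baseline figures for the LLM-based option are hypothetical placeholders, so replace them with your own estimates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    development: float          # one-off build cost
    monthly_maintenance: float
    monthly_tokens: float

    def total(self, months: int) -> float:
        """Total cost of ownership over the given number of months."""
        return self.development + months * (self.monthly_maintenance + self.monthly_tokens)


# Hypothetical baseline for LLM-based prediction logic (placeholder numbers).
llm = CostModel(development=30_000, monthly_maintenance=2_000, monthly_tokens=1_000)

# Lightweight option derived from the ratios in the comparison above.
lightweight = CostModel(
    development=llm.development / 3,
    monthly_maintenance=llm.monthly_maintenance / 5,
    monthly_tokens=llm.monthly_tokens / 10,
)

if __name__ == "__main__":
    for label, model in (("LLM-based", llm), ("lightweight", lightweight)):
        print(f"{label}: {model.total(12):,.0f} over 12 months")
```

Because the recurring costs shrink faster than the one-off cost, the gap between the two options widens the longer the feature stays in production.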

Example 5: Strategy vs experimentation

There is a real difference between these two approaches. An AI strategy works for mid-market businesses, while experimentation is a more natural fit for startups. When our AI consultant works on a strategy, they define the development roadmap, product metrics, and a timeline.

For experimentation, we choose the MVP approach, where you spend the least amount of money to get the quickest outcome. Experiments always have hard deadlines so that you can adapt to the changing AI environment as quickly as possible. Even a failed experiment brings real value, because it saves the money you would otherwise have lost on a full-scale solution.

Here are examples of how we approach strategy and experimentation:

- We always advise building the architecture so that you can easily switch from Gemini to Claude, Grok, or ChatGPT. This lets you weigh token prices against new model capabilities. Such an architecture determines your product’s ability to adapt to market changes.

- We build the foundation to handle cases where a model’s rate limit or quota is exhausted and the provider returns 429 responses. That is also where you can experiment with a range of models, choosing the ones with lower latency, better performance, and higher quality.
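One possible shape for the 429 handling described in the last point is an ordered fallback chain. The sketch below is an assumption-laden illustration: `RateLimited`, `Provider`, and `complete_with_fallback` are hypothetical names, and each provider is represented by a plain function that a real adapter would implement by calling the vendor API and raising `RateLimited` when it receives HTTP 429.

```python
from typing import Callable, List, Sequence, Tuple


class RateLimited(Exception):
    """Raised by a provider adapter when the vendor answers HTTP 429."""


Provider = Tuple[str, Callable[[str], str]]  # (provider name, completion function)


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider name, completion) from the first that answers."""
    exhausted: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimited:
            exhausted.append(name)  # remember who was rate-limited, then try the next one
    raise RuntimeError(f"all providers rate-limited: {', '.join(exhausted)}")
```

The order of the `providers` sequence is where experimentation happens: you can put the cheapest or fastest model first and keep a more expensive one as the safety net.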

Why data readiness decides everything

Data readiness is a critical part of any AI readiness assessment for business, and in many cases it is the deciding factor between success and failure.

It includes:

- data quality and consistency

- data structure and standardization

- system-level connections between sources

- accessibility and usability for AI systems

Most AI projects fail not because of model limitations, but because the underlying data is incomplete, fragmented, or not properly prepared for use.

In fact, a widely cited Gartner prediction held that 85% of AI projects would deliver erroneous outcomes due to bias in data, algorithms, or the teams responsible for managing them.
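A first data readiness check does not require any AI tooling. The sketch below counts missing required fields and duplicate records in a list of rows. The function name and the parking-style field names used with it are hypothetical, and a real assessment would cover far more than these two signals.

```python
from typing import Any, Dict, List, Sequence


def readiness_report(
    records: Sequence[Dict[str, Any]],
    required: Sequence[str],
    key: str,
) -> Dict[str, Any]:
    """Summarize basic quality signals: row count, missing values per field, duplicate keys."""
    # A value counts as missing when the field is absent, None, or an empty string.
    missing = {
        field: sum(1 for r in records if r.get(field) in (None, ""))
        for field in required
    }
    seen = set()
    duplicates = 0
    for r in records:
        k = r.get(key)
        if k in seen:
            duplicates += 1
        else:
            seen.add(k)
    return {"rows": len(records), "missing": missing, "duplicates": duplicates}
```

Running a report like this on each source system before any modeling work gives an early, inexpensive view of how much preparation the data will need.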

AI readiness checklist for business (full version before you act)

Before you invest in AI or work with an AI consulting company, use this checklist as a go/no-go decision tool to validate your AI readiness before committing budget:

- Have you clearly defined the business problem AI will solve?

- Do you understand whether AI is a feature or a core capability?

- Is your data structured, accessible, and usable?

- Can you estimate ROI — not just costs?

- Do you have internal ownership and execution capability?

- Are you starting with an MVP instead of a full-scale rollout?

- Is your architecture flexible and vendor-independent?

- Do you actually need AI — or is there a simpler solution?

This is the most practical answer to the question of what an AI readiness assessment is: a decision-making tool, not just a theoretical exercise.

Final: What do you do next?

If the direction is unclear, it’s often worth validating your approach with an experienced AI consulting company before committing to development. At Globaldev, we provide free initial consultations to help businesses clarify use cases, assess feasibility, and define the right AI strategy before investment.

If your results on the AI readiness checklist are strong, get in touch with us to move forward with an MVP.

And remember: AI, like any feature, can either strengthen or complicate your product. The outcome depends on the ROI of your AI adoption. The goal is simple: Use AI where it creates measurable value — and avoid it where it does not.