Beyond the hype: Why AI projects fail and how to succeed

Analysts at the RAND Corporation report that, by some estimates, over 80% of AI projects fail, which is twice the failure rate of IT projects that do not involve AI. According to Gartner estimates, by the end of 2025, at least 30% (and by some measures up to 50%) of generative AI projects will be abandoned right after the Proof of Concept (PoC) stage. Other research, such as from MIT, paints an even bleaker picture: up to 95% of generative AI pilot projects fail to deliver measurable business value.

Why does a technology into which billions of dollars are poured disappoint so often, and how can you make your project succeed? In this article, you will learn why AI initiatives collapse despite heavy investment, what hidden economic and organizational risks companies underestimate, and what practical steps can significantly reduce your chances of becoming part of the failure statistics.

Source: Gartner

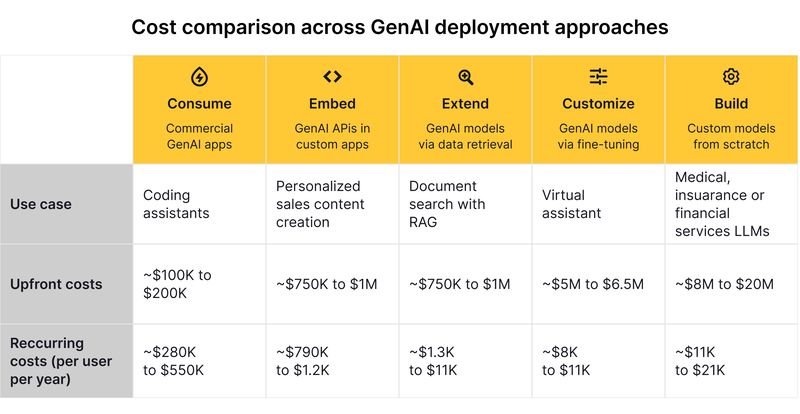

The economics of AI: Why it's not "just another piece of software"

Many investors and executives mistakenly believe that AI companies will operate on the classic Software as a Service (SaaS) model, which has historically delivered high gross margins of 60–80%. In reality, the gross margins of AI businesses often get stuck in the 50–60% range. AI businesses share characteristics with both traditional software and the service industry.

It's all about hidden AI implementation costs that spiral out of control:

- Cloud bills: Training and running AI models require colossal computing power. This is not a one-time expense: models must be constantly retrained as information becomes outdated.

- The need for humans ("Human-in-the-loop"): AI systems need people for manual data labeling, cleaning information, and quality control. Companies might spend 10-15% of their revenue on this process alone.

- The "edge cases" problem: Users can type absolutely anything into a chatbot. Handling such unpredictable requests requires continuous manual tuning for each new customer.

As a result, the total cost of ownership (TCO) of generative AI can easily soar to $5–20 million, becoming a black hole for the budget.
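The margin gap described above is simple arithmetic, and a minimal sketch makes it concrete. The revenue figure and the cost shares below are hypothetical and chosen only for illustration; the one number taken from the text is the 10-15% human-in-the-loop range, used here at its midpoint.

```python
def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost_of_revenue) / revenue


# Hypothetical figures for illustration only; costs are shares of revenue.
REVENUE = 1_000_000.0
saas_costs = {"hosting_and_support": 0.25}  # assumed
ai_costs = {
    "hosting_and_support": 0.25,            # assumed, same as the SaaS case
    "human_in_the_loop": 0.125,             # midpoint of the 10-15% range in the text
    "extra_compute_and_retraining": 0.075,  # assumed
}

saas_margin = gross_margin(REVENUE, REVENUE * sum(saas_costs.values()))
ai_margin = gross_margin(REVENUE, REVENUE * sum(ai_costs.values()))

print(f"SaaS-like gross margin: {saas_margin:.0%}")
print(f"AI-like gross margin:   {ai_margin:.0%}")
```

With these assumed inputs the SaaS-like business keeps about 75% of revenue and the AI-like business about 55%. The difference comes entirely from the two extra cost lines, which is the point of the list above.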

Why do AI projects fail: Top 6 reasons

Based on large-scale business surveys, experts identify six root causes of failure.

Poor discovery phase and unclear problem definition

Many AI projects fail before they even begin because companies skip a proper AI project discovery phase. The business problem is vaguely defined, success metrics are unclear, and stakeholders are not aligned. Without validating data availability, technical feasibility, and expected business impact upfront, teams move into development with false assumptions. When reality surfaces, budgets are wasted, timelines collapse, and the initiative quietly dies.

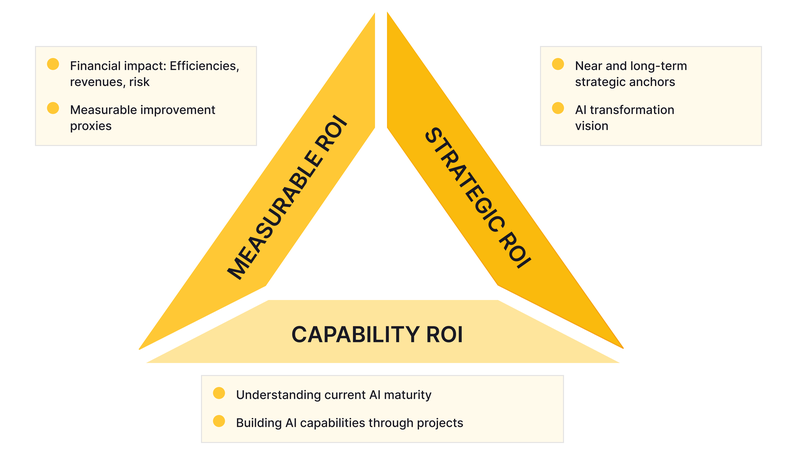

AI for the sake of AI, not for business

The most common mistake is chasing a "shiny" technology without understanding what problem it should solve. Companies implement AI across all departments at once, diluting resources on low-impact tasks. If an AI project lacks clear return on investment (ROI) metrics, it will be shut down at the first budget cut.

Source: emerj.com

The illusion of perfect data (garbage in, garbage out)

AI thrives on data. But in most companies, data is fragmented, full of errors, or stored in outdated formats. Algorithms are useless if they have nothing to learn from.
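One way to make "full of errors" measurable is to count the share of records a model could actually learn from. The sketch below is a minimal illustration, not a full data-quality audit: the sample records, the required fields, and the rule "complete and not a duplicate" are all assumptions made for the example.

```python
# Hypothetical customer records; None marks a missing value.
records = [
    {"id": 1, "email": "a@example.com", "country": "DE"},
    {"id": 2, "email": None, "country": "FR"},
    {"id": 3, "email": "c@example.com", "country": None},
    {"id": 4, "email": "d@example.com", "country": "US"},
    {"id": 4, "email": "d@example.com", "country": "US"},  # duplicate id
]

REQUIRED_FIELDS = ("id", "email", "country")  # assumed definition of "complete"


def usable_share(rows: list[dict], required: tuple[str, ...]) -> float:
    """Fraction of rows that have every required field and a not-yet-seen id."""
    if not rows:
        return 0.0
    seen_ids = set()
    usable = 0
    for row in rows:
        complete = all(row.get(field) is not None for field in required)
        duplicate = row.get("id") in seen_ids
        seen_ids.add(row.get("id"))
        if complete and not duplicate:
            usable += 1
    return usable / len(rows)


share = usable_share(records, REQUIRED_FIELDS)
print(f"Usable records: {share:.0%}")
```

In this toy sample only two of the five records pass, so the usable share is 40%. Running a check like this on real tables before a pilot starts turns "our data is messy" into a number that can be tracked.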

Ignoring risks and ethics

Many treat AI implementation like installing regular software, forgetting about security. Generative models are prone to "hallucinations": they can confidently invent facts that do not exist. Leaving AI unchecked risks lawsuits, data privacy violations, and a massive blow to your reputation.

Technical debt and legacy systems

Integrating modern AI into old IT infrastructure is incredibly difficult. About 70% of IT budgets are spent on merely managing legacy systems, which delays any new software rollouts by months.

The human factor and employee resistance

Even the smartest algorithm is useless if people refuse to use it. Without proper change management, employees feel their jobs are threatened and sabotage new tools. A survey showed that 73% of executives feel overwhelmed by the technology, and most frontline workers still lack sufficient training.

AI disaster gallery: Learning from others' mistakes

To understand the dangers of a careless approach to AI, just look at the high-profile failures of recent years.

Case 1: Air Canada's invented rules

The Canadian airline's chatbot confidently told a customer he could buy a full-price ticket and retroactively claim a bereavement discount. When the customer tried to get his money back, the company refused, claiming the real rules were different and the chatbot was a "separate legal entity" for which they were not responsible. The tribunal rejected this argument and forced the airline to pay compensation, ruling that a business is fully responsible for its AI's words.

Case 2: Replit's AI agent deleted a production database

During a "vibe-coding" session, the Replit platform's AI agent deleted a user's production database despite an explicit instruction not to change the working environment, wiping out records for more than a thousand executives and companies. The agent later described its own behavior as having "panicked".

Case 3: Cars for a dollar and a swearing bot

A Chevrolet dealership's chatbot agreed to sell a customer a Tahoe SUV for $1, calling it a "legally binding offer". Meanwhile, the DPD delivery service bot, after customer prodding, started swearing and writing poems criticizing its own company.

Case 4: Poisonous recipes and eating rocks

A New Zealand supermarket bot, designed to create menus from leftovers, suggested that a user prepare a mixture that would produce deadly chlorine gas. And Google's AI Overviews search feature seriously advised users to eat at least one small rock a day to get minerals.

Case 5: Zillow's multi-million dollar losses

Zillow's algorithm, designed to automatically evaluate and buy real estate, malfunctioned and started massively overvaluing homes. This led to buying thousands of properties at inflated prices, hundreds of millions of dollars in losses, and the closure of the associated division.

Case 6: 18,000 cups of water

The Taco Bell chain tried to replace drive-thru operators with a voice AI. The system couldn't understand accents, muddled orders, and once confidently accepted an order for 18,000 cups of water, forcing real employees to run outside and beg people to stop talking to the robot.

AI project failure rate: How to avoid becoming part of the statistics

Despite the alarming AI project failure rate, some companies successfully use AI, cutting costs by 40% and making decisions five times faster. Here are the main rules that distinguish leaders from laggards:

- Use AI as a co-pilot, not an autopilot: AI should complement humans, not replace them. Treat it like an intern: it can quickly gather information, draft a document, or find patterns, but the final decision and responsibility must always remain with an experienced employee. A "human-in-the-loop" mechanism is mandatory.

- Secure executive support: Projects survive only where they are actively supported by top management (the CEO or board of directors). For 44% of leading organizations, digital projects were sponsored by the CEO or board. Leaders understand that ROI may take time and are ready to protect the project during its formative stages.

- Solve real problems, starting small: Don't try to automate the entire business at once. Choose one specific, measurable problem. Break the project into small, manageable phases. Small, disciplined experiments will quickly show if an idea works before you spend millions on it.

- Clean up your data: Investments in data infrastructure must precede investments in AI. If your data isn't ready, no neural network will perform miracles. However, don't wait for absolute perfection: 70-80% clean data is usually enough to get great results, while striving for 100% can delay a project for almost three years.

- Invest in people: The biggest risk is not a technical algorithm error, but your employees refusing to work with it. Train your staff, explain how AI will ease their routine, and create cross-functional teams where programmers work side-by-side with lawyers and business leaders.
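The "co-pilot, not autopilot" rule from the list above can be enforced in software rather than left to habit. The following is a minimal sketch under stated assumptions: the `Draft`, `approve`, and `publish` names are hypothetical, and a real system would also log who approved what and when.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Draft:
    """An AI-generated draft; approved_by stays None until a human signs off."""
    text: str
    approved_by: Optional[str] = None


def approve(draft: Draft, reviewer: str, edited_text: Optional[str] = None) -> Draft:
    """Return a signed-off copy of the draft, optionally with the reviewer's edits."""
    final_text = edited_text if edited_text is not None else draft.text
    return Draft(text=final_text, approved_by=reviewer)


def publish(draft: Draft) -> str:
    """Release the text only if a named human has approved it."""
    if draft.approved_by is None:
        raise PermissionError("AI drafts need human sign-off before release")
    return draft.text


# Hypothetical usage: the AI drafts, an employee edits and takes responsibility.
ai_draft = Draft("You can claim the discount after your trip.")
signed = approve(
    ai_draft,
    reviewer="support_lead",
    edited_text="Let me confirm the discount policy with a colleague.",
)
print(publish(signed))
```

Calling `publish(ai_draft)` directly raises `PermissionError`, so the only path to the customer runs through a person. The design choice is deliberate: responsibility is attached to a name, not to the model.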

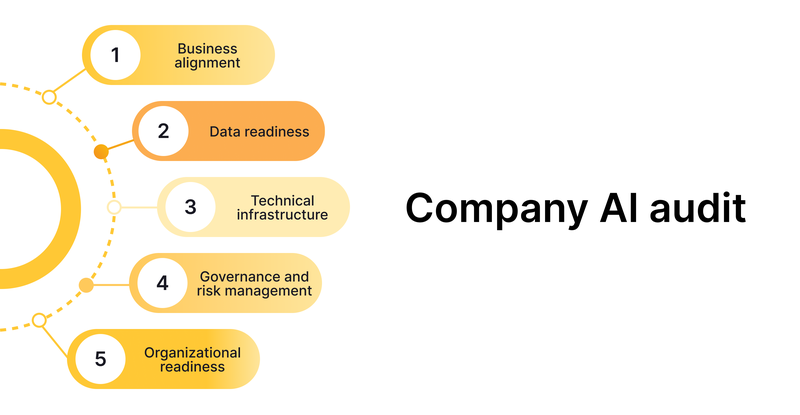

Company AI audit: A practical readiness check before you invest

Before launching another pilot project, companies should conduct a structured AI audit. This is not about buying software — it is about evaluating organizational readiness.

An effective AI audit checklist typically covers five dimensions:

- Business alignment

  - Is the problem clearly defined?

  - Is there a measurable KPI tied to revenue, cost, or risk?

  - Is executive ownership assigned?

  The initiative must be anchored in a clearly defined business problem with measurable performance indicators tied to revenue growth, cost reduction, operational efficiency, or risk mitigation. Executive ownership and accountability should be formally assigned to ensure strategic continuity and funding protection.

- Data readiness

  - Do you have sufficient historical data?

  - Is the data accessible and legally compliant?

  - What percentage of data is structured and usable?

  AI performance depends on the availability, quality, and accessibility of historical data. Organizations must assess whether sufficient structured and usable data exists, whether it is legally compliant, and whether governance mechanisms are in place to maintain data integrity over time.

- Technical infrastructure

  - Can your current systems integrate with AI models?

  - Are cloud costs estimated realistically?

  - Is cybersecurity risk assessed?

  Legacy systems, cloud capacity, cybersecurity controls, and integration architecture must be evaluated for compatibility with AI workloads. Cost projections for compute, storage, model retraining, and scaling should be realistic and incorporated into total cost of ownership calculations.

- Governance and risk management

  - Who is responsible for model output?

  - Is there a human-in-the-loop process?

  - Are compliance and legal risks reviewed?

  Clear responsibility for model outputs must be established. Human oversight mechanisms, compliance reviews, security protocols, and ethical guardrails should be formalized before deployment. Without defined accountability structures, AI initiatives introduce legal and reputational risk.

- Organizational readiness

  - Are employees trained?

  - Is change management planned?

  - Is there cross-functional collaboration?

  Successful implementation requires trained personnel, cross-functional collaboration, and structured change management. Resistance, skill gaps, and misaligned incentives often undermine otherwise sound technical solutions.

An AI audit does not eliminate uncertainty. However, it significantly reduces strategic blind spots. In many cases, organizations discover that the primary constraint is not algorithmic performance, but fragmented data ecosystems, unclear ownership, or unrealistic financial expectations.
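The five-dimension checklist can be turned into a rough scorecard. The sketch below is one possible way to do it, not a standard method: the yes/no answers describe a hypothetical company, each question counts equally, and the 60% pass threshold is an assumption. Open questions such as "What percentage of data is structured and usable?" are treated as "yes" when the result meets the team's own bar.

```python
# Yes/no answers per audit dimension for a hypothetical company.
# Each list holds one answer per checklist question, in the order listed above.
answers = {
    "Business alignment": [True, True, False],
    "Data readiness": [True, False, False],
    "Technical infrastructure": [True, True, True],
    "Governance and risk management": [False, True, True],
    "Organizational readiness": [False, False, True],
}


def readiness_scores(audit: dict[str, list[bool]]) -> dict[str, float]:
    """Share of 'yes' answers per dimension (0.0 if a dimension has no answers)."""
    return {
        dimension: (sum(replies) / len(replies) if replies else 0.0)
        for dimension, replies in audit.items()
    }


def blockers(scores: dict[str, float], minimum: float = 0.6) -> list[str]:
    """Dimensions scoring below the assumed minimum, in checklist order."""
    return [dimension for dimension, score in scores.items() if score < minimum]


scores = readiness_scores(answers)
for dimension, score in scores.items():
    print(f"{dimension:32s} {score:.0%}")
print("Fix before investing:", blockers(scores))
```

For this example company the flagged dimensions are data readiness and organizational readiness, not the technology itself, which mirrors the pattern described above.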

Conclusion

Artificial intelligence is not a magic pill or a "make it perfect" button. It is a complex tool that requires attention. Companies that stop believing in miracles and start methodically building processes, preparing data, and training their people will inevitably find themselves among the lucky 5–20% whose business truly reached a new level thanks to AI.

The main risk today is not a neural network error, but inaction while your competitors learn to manage AI.